Open-source software vs. the proposed Cyber Resilience Act

By Maarten Aertsen

NLnet Labs is closely following a legislative proposal by the European Commission affecting almost all hardware and software on the European market. The Cyber Resilience Act (CRA) intends to ensure cybersecurity of products with digital elements by laying down requirements and obligations for manufacturers.

The follow-up "What I learned in Brussels" (Feb 2024) explains the status quo at FOSDEM 2024. We are now "Tracking CRA implementation" (July 2024).

'Commercial' + Critical = Compliance overload?

In this post we will share our understanding of the legislation and its (unintended) negative effects on developers of open-source software. At this point, we have questions and concerns, not answers or solutions.

We feel the current proposal misses a major opportunity. At a high level the 'essential cybersecurity requirements' are not unreasonable, but the compliance overhead can range from tough to impossible for small or cash-strapped developers. The CRA could bring support to open-source developers maintaining the critical foundations of our digital society. But instead of introducing incentives for integrators or financial support via the CRA, the current proposal will overload small developers with compliance work.

By the end of the piece, you'll know all about 'commercial activities', 'critical products' and the compliance load that the combination causes.

We would love to be wrong about most of our analysis. So if you believe the situation to be less grim than we portray it to be, please talk to me so I can update this overview. However, if you share our concerns, this is what you can do:

- Spread the word. Help your fellow developers. Talk to people around you with legal and policy skills. Let them know how the CRA proposal affects them, their organisation or society at large.

- Read the proposal and the community's comments. This post concentrates on high-level scoping. There is more (updated):

  - Read our feedback to the European Commission, jointly submitted with our friends at ISC, CZ.NIC and NetDEF.

  - Watch the CRA lightning talks and panel recorded at FOSDEM 2023, with participation by Commission staff.

  - Browse OSI's overview of all open source feedback to the Commission.

  - Read Bert Hubert's perspective on the lack of standards and audit capacity required, or his post on the alignment of incentives.

  - There is also an EC proposal on liability that has chosen another path in its treatment of open source.

- Get in touch and talk with policy makers at the Commission, your government or your favorite ITRE MEP: the rapporteur is Nicola Danti, while the shadow rapporteurs are Henna Virkkunen, Beatrice Covassi, Ignazio Corrao and Evžen Tošenovský.

As a tech community, we have a shared responsibility to contribute to the quality of policy making that affects us and, through our work, society at large.

We also provide technical expertise to policy-making bodies, including regulators and governments so they have the understanding they need when making public policy decisions related to the Internet infrastructure.

Our understanding of the Act

After creating a (voluntary) certification framework with the EU Cybersecurity Act and then regulating network and information services used to provide essential services with the NIS2 directive, the European Commission (EC) now intends to set mandatory requirements for products with digital elements based on the existing EU framework for product safety and liability legislation.

In the near future, manufacturers of toasters, ice cream makers and (open-source) software will have something in common: to make their products available on the European market, they will need to affirm their compliance with EU product legislation by affixing the CE marking. For our audience, in the remainder of this post we will write developers (of open-source software) where the CRA talks about manufacturers.

Essential cybersecurity requirements for products with digital elements

The Cyber Resilience Act includes a set of essential cybersecurity and vulnerability handling requirements for manufacturers (Annex I). It will require products to be accompanied by information and instructions to the user (Annex II). Manufacturers will need to perform a risk assessment and produce technical documentation (Annex V) to demonstrate that they meet the essential requirements.

Now let's be honest, hardware and software we all rely on should be designed with security in mind, not ship with known exploitable vulnerabilities and get security updates for a reasonable amount of time. Though the language is very high level, a 'you must be this tall to ride' bar is definitely in order. But it is the details that matter.

Products, critical products – and consequences

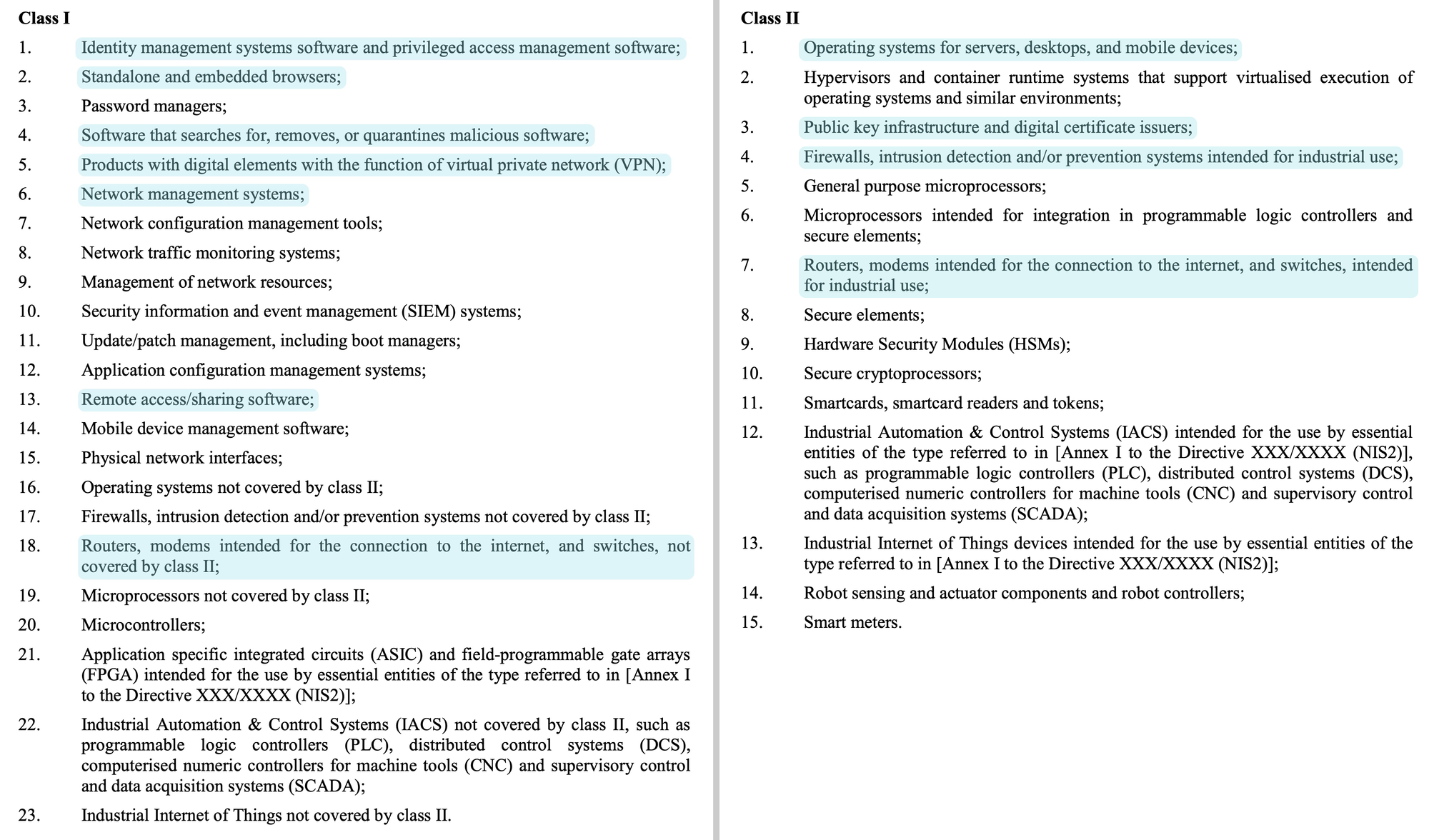

The Commission knows that not all products are depended upon equally in our society. The CRA therefore distinguishes between products and critical products 'reflecting the level of cybersecurity risk related to these products'. Annex III contains two categories of critical products, class I and class II. Some highlights from these lists:

These lists of critical product categories are not static. The Commission can add or remove categories in the future based on their level of cybersecurity risk. This risk is determined by the following criteria:

Article 6(2)

(a) the cybersecurity-related functionality of the product with digital elements, and whether the product with digital elements has at least one of following attributes:

(i) it is designed to run with elevated privilege or manage privileges;

(ii) it has direct or privileged access to networking or computing resources;

(iii) it is designed to control access to data or operational technology;

(iv) it performs a function critical to trust, in particular security functions such as network control, endpoint security, and network protection.

(b) the intended use in sensitive environments, including in industrial settings or by essential entities of the type referred to in the Annex [Annex I] to the Directive [Directive XXX/XXXX (NIS2)];

(c) the intended use of performing critical or sensitive functions, such as processing of personal data;

(d) the potential extent of an adverse impact, in particular in terms of its intensity and its ability to affect a plurality of persons;

(e) the extent to which the use of products with digital elements has already caused material or non-material loss or disruption or has given rise to significant concerns in relation to the materialisation of an adverse impact.

There are consequences for manufacturers of critical products. To understand them, we need to talk about the compliance side of the CRA.

Demonstrating conformity: how to show that requirements have been met?

Developers have to declare conformity with the requirements under the CRA and thus assume responsibility for compliance. You may have seen a statement of conformity when you bought a washing machine or other consumer product in the EU (if not, see Annex IV for an example). The washing machine software also needs a CE marking, either on said declaration, or its website. How does this work?

Article 6(4)

Critical products with digital elements shall be subject to the conformity assessment procedures referred to in Article 24(2) and (3).

A developer needs to follow one of three possible procedures to assess conformity. They can either:

1. perform a self-assessment, in some cases against a technical standard (jargon: internal control, module A); or

2. apply for a product examination by an auditor and then set up checks and balances for their development processes (jargon: EU-type examination by a notified body followed by internal production control, module B and C); or

3. apply for an assessment of their quality system (documented policies, procedures and instructions) by an auditor (jargon: full quality assurance, module H).

These consecutive options entail an increasing amount of compliance work. Option 3 may scale more favourably for manufacturers with a large number of different products.

Demonstrating compliance for critical products involves costs for third-party auditors

Conformity assessment is where the consequences of the distinction between products and critical products show up: developers of critical products may not perform self-assessment and need to involve third-party auditors. Only options 2 and 3 are available to them. (Update: If there is a technical standard available that they can apply and their critical product is of class I, self-assessment against that standard becomes available. Here be dragons.)
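To make the scoping rule concrete, here is a minimal sketch of how we read it: which of the three procedures stay open to a developer, depending on the product's class and on whether a technical standard exists that they can apply. All names in it (`ProductClass`, `available_procedures`, `standard_applied`) are our own illustrative choices, not terms from the CRA, and the logic encodes only our understanding as described in this post, not the legal text.

```python
from enum import Enum


class ProductClass(Enum):
    """Hypothetical labels for the distinction the CRA draws (Annex III)."""
    DEFAULT = "product (not listed in Annex III)"
    CRITICAL_I = "critical product, class I"
    CRITICAL_II = "critical product, class II"


# The three procedures, in order of increasing compliance work.
SELF_ASSESSMENT = "1: self-assessment (internal control, module A)"
PRODUCT_EXAM = "2: product examination by an auditor (module B and C)"
QUALITY_SYSTEM = "3: quality system assessment by an auditor (module H)"


def available_procedures(product_class, standard_applied=False):
    """Return the procedures open to a developer, as we understand the proposal.

    standard_applied: assumption that a suitable technical standard exists
    and the developer has applied it.
    """
    third_party = [PRODUCT_EXAM, QUALITY_SYSTEM]
    if product_class is ProductClass.DEFAULT:
        # Non-critical products may use any of the three options.
        return [SELF_ASSESSMENT] + third_party
    if product_class is ProductClass.CRITICAL_I and standard_applied:
        # Class I only: self-assessment against a standard becomes available.
        return [SELF_ASSESSMENT] + third_party
    # Critical products otherwise need a third-party auditor.
    return third_party


if __name__ == "__main__":
    for cls in ProductClass:
        for std in (False, True):
            print(cls.value, "| standard applied:", std)
            for option in available_procedures(cls, standard_applied=std):
                print("   ", option)
```

The sketch makes one thing visible: for a class II product nothing the developer does brings self-assessment back, and for class I it depends entirely on a standard being available.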

Having covered some of the preliminaries we will now turn to implications for developers of open-source software.

The effects on developers of open-source software

All categories of critical products include widely used implementations in open-source software

It is quickly apparent that there are open-source projects that create products in almost all of the categories of critical (software) products. Think of Keycloak, WireGuard, BIND, OpenSSH, FreeBSD, Boulder, OpenWrt, BIRD and FRR to name a few based on the list excerpt above.

And, while specific categories—and therefore affected projects—may come or go in the upcoming negotiations on the proposal, the broad reach of the CRA is quite clear.

But wait, isn't there an exception for open-source?

Yes*, but with a very big asterisk. Quoting CRA, recital 10:

In order not to hamper innovation or research, free and open-source software developed or supplied outside the course of a commercial activity should not be covered by this Regulation. [..]

Let's first acknowledge and appreciate that the European Commission created an exception at all. That means we can now argue about the specifics of the chosen exception and its implications and not about the merits of open-source.

Now, what is a commercial activity?

The CRA does not define this term. However, conversations with people more knowledgeable on product legislation pointed me to the EU Blue guide to the implementation of EU product rules:

Commercial activity is understood as providing goods in a business related context. Non-profit organisations may be considered as carrying out commercial activities if they operate in such a context. This can only be appreciated on a case by case basis taking into account the regularity of the supplies, the characteristics of the product, the intentions of the supplier, etc. In principle, occasional supplies by charities or hobbyists should not be considered as taking place in a business related context.

Open-source software is provided both within and outside of business related contexts. And the 'occasional supplies' exception in this quote seems to be of limited use to projects society comes to depend on. Would you consider an open-source operating system (MINIX) that has been freely available for 35 years an 'occasional supply'? What does its integration in all Intel processors since 2015 mean for being 'goods' outside a 'business related context'? How about the BIND project, a staple of open-source core Internet infrastructure shipping for 40 years?

A distinction between open-source development with no income, some income and full income?

Having read this, can you now judge whether the open-source software you rely on is developed in the course of a commercial activity? We definitely feel unqualified to make that distinction. Yet, it is critical to understand whether or not the CRA applies to your project.

In addition to uncertainty for developers, there may be legal uncertainty about the boundaries of the term 'commercial activity' in the context of the supply of open-source software. It is not reasonable to expect the open-source movement to go through the courts to create the legal precedents necessary to clear this up.

Charging for support makes open-source a commercial activity

There is an ample supply of unmaintained and undermaintained software. That is also true for open-source software, including for projects that many rely on (popular example: OpenSSL in 2014). The more widely software is depended upon, the higher the demands and expectations that are placed on its developers. To meet expectations, it is not uncommon for developers to seek ways to work on such projects full-time.

So should the uncertainty surrounding 'commercial activities' in the CRA discourage volunteers from earning their living and working on open-source software full-time? We think it would be in society's best interest to have them work on their code.

Common ways for open-source developers to create the necessary income to do so are to accept employment at an organisation that uses their software, work freelance on paid features, or sell their time spent on providing support.

Back to the CRA. Recital 10 continues with some examples of commercial activities:

In the context of software, a commercial activity might be characterized not only by charging a price for a product, but also by charging a price for technical support services, by providing a software platform through which the manufacturer monetises other services, or by the use of personal data for reasons other than exclusively for improving the security, compatibility or interoperability of the software.

The wording in Recital 10 on technical support services puts the practice of providing paid support firmly in scope for the CRA. In turn, this may reduce the attractiveness for developers to ask for contributions to the continued stability and availability of open-source software through paid support contracts.

Due diligence and improvement incentives when an open-source product is used as a component

An open-source product can be used as a building block of other products. For example, our free and open-source DNS resolver (product) Unbound is a component in commercial appliances offered by Infoblox (another product). The CRA has implications for such integrations:

Article 10(4)

4. For the purposes of complying with the obligation laid down in paragraph 1, manufacturers shall exercise due diligence when integrating components sourced from third parties in products with digital elements. They shall ensure that such components do not compromise the security of the product with digital elements.

This seems like a promising mechanism to encourage contributions to open-source projects that are used as a component. In our current practice, Infoblox relies on Unbound in some products, but it also financially supports its development.

Unfortunately, the current proposal does not go beyond due diligence. Sandboxing a component by decreasing its ability to contribute to a compromise of the security of a product may be one way to meet the stated requirement. Such an approach may be very worthwhile and effective from a security perspective, but it would not benefit the security of the component itself (and its use elsewhere). Neither does it encourage an integrating manufacturer to help the open-source developer meet the obligations of the CRA on their own. This is especially relevant if the component happens to (also) be a (stand-alone) product.

Business process audits are a very poor match for open-source projects not run by businesses

Recall that self-assessment is not available to critical products. We have three concerns with the other two available options involving third-party audits:

- Audits (and auditors) are very much geared towards businesses and business processes, which do not align well with how many open-source projects operate.

- Legal, compliance and auditing are skill-sets not necessarily present in groups of open-source developers who are otherwise well equipped to develop secure software.

- Audits can be very expensive. Compliance costs can be prohibitive for projects that (partially) rely on volunteers and/or are (partially) sustained by donations, paid features or in-kind support from the users of their software.

The Commission appears to have considered the costs angle and instructs auditors to consider price reductions in some cases:

Article 24(5)

Notified bodies shall take into account the specific interests and needs of small and medium sized enterprises (SMEs) when setting the fees for conformity assessment procedures and reduce those fees proportionately to their specific interests and needs.

Note that this talks about SMEs, whereas many open-source projects are more of a social construct than a formal legal entity. How should an auditor set fees for the work of a group of individuals, some of whom may have income from contributing to development of a joint project? What is also unclear is where the auditor should recoup their losses from charging less. After all, some open-source projects are at criticality and complexity levels surpassing many commercial products on the market.

Compliance overload?

We are concerned about a compliance overload for individuals and groups of developers maintaining the 'critical products' that our society already depends on, while they try to make a living from that work.

There is a significant imbalance between use and (financial) support of open-source software. Quoting statistics we provided for a recent BITAG report on routing security:

Out of an estimated 2,000 installations of the Routinator Relying Party software and 1,400 networks using the Krill delegated Certificate Authority software, fewer than ten fund their development.

Wouldn't a compliance overload get us further from the CRA's stated goal of addressing a major problem for society: 'a low level of cybersecurity of products with digital elements'?

Will the CRA encourage developers to create more secure software, or will it impose compliance costs that distract them from doing so?

Many open-source projects will not be scared of the essential security requirements or the vulnerability handling requirements. Some of these requirements actually originated in the open-source community. Others are widely considered to be best practices.

We feel the current proposal misses a major opportunity. At a high level the 'essential cybersecurity requirements' are not unreasonable, but the compliance overhead can be tough to impossible for small or cash-strapped developers. The CRA could bring support to open-source developers maintaining the critical foundations of our digital society. But, instead of introducing incentives for integrators or financial support via the CRA, the current proposal will overload small developers with compliance work.