The Ongoing Story of OpenINTEL: Measuring the DNS for Research, Policy and Protocol Improvements


By Roland van Rijswijk-Deij

On November 28th, the OpenINTEL project received recognition for its groundbreaking work on measuring the Domain Name System from the Dutch national science councils in the form of the Research Data Netherlands Prize. We are very proud that our effort to measure the state of the DNS on a daily basis received this recognition. OpenINTEL has made new research possible, helped inform public policy and has been used to measure and inform Internet protocol improvements. In this blog we tell the story of how all of this got started, what OpenINTEL has been used for so far, and what our plans for the future are.

How we got started

Like many projects, OpenINTEL started with a simple question. While working on research into denial-of-service attacks, we accidentally discovered that owners of so-called Booter websites (that offer “DDoS-as-a-Service”) protect themselves against attacks using cloud-based DDoS protection services. This sparked the question: how widespread is the use of these DDoS protection services? As many of these services work through the DNS, we wanted to start tracking the state of the DNS. And then one thing led to another: we quickly realised that what is in the DNS says a lot about how the Internet is used. This is how the idea behind OpenINTEL was born: we wanted to design and implement a measurement system that could track the state of the DNS for all domains in a top-level domain every day.

A Brief History of OpenINTEL

Once we had decided what we wanted to do, we set out to design and implement the system. Some choices were made early on. We wanted to use standard DNS tooling, and chose to use LDNS and Unbound from NLnet Labs. We also wanted to make our data easy to analyse, and based on the favourable experiences of our project partner SIDN Labs, we chose to design for the Hadoop big data ecosystem.

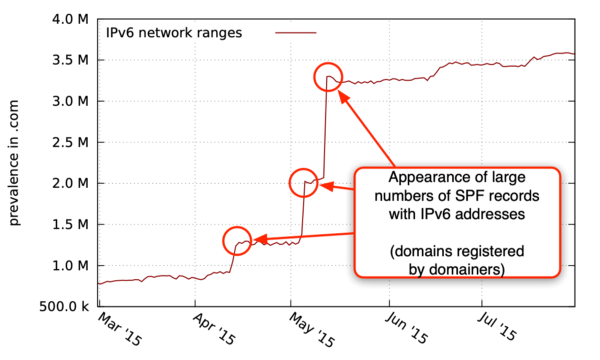

In the summer of 2014, we started writing and testing our code, and started collecting DNS zone files for top-level domains. At that time, this was limited to the .com, .net and .org TLDs. By early 2015, we had working code that would stably and reliably measure these three TLDs well within a 24-hour period. With the help of our project partner SURFnet, we then set up a private cloud environment based on OpenStack to run our measurements from. On the 21st of February 2015 we celebrated our first full day of measurements, and after some final tweaks, we started our regular measurements on March 1st, 2015.

Soon we started accumulating vast amounts of data, and we had one small problem: we had no Hadoop cluster to analyse it on yet. With the help of some ingeniously parallelized code, we managed to analyse our first six months of data, crunching for two days on a 40-core machine, all so we could present our project to the world at the ACM SIGCOMM Conference 2015 in London (see the poster here). While we had shown we could even analyse our data without a big cluster, we realised we would soon need more power.

In September of 2015, the University of Twente, SIDN and SURFnet joined forces to fund a dedicated Hadoop cluster for OpenINTEL. With the help of SIDN’s experience in running such a cluster, we set it up and repeated the analysis we had done in the summer. What took two days for six months of data now took less than 45 minutes for one year of data, a vast improvement!

In 2016 we significantly expanded our coverage, adding additional generic TLDs, and also adding our first country-code TLDs. Over 2017, we further expanded our coverage, and added a daily infrastructure measurement, to also cover parts of the infrastructure behind the DNS and to measure new protocols to secure e-mail communication. As of 2018, we cover all generic TLDs. Also in 2018, a fourth partner, NLnet Labs, joined our project. Given that we already extensively use their open source software, this is a welcome addition to the team.

Research Highlights

Over the past 3.5 years, data from OpenINTEL has been used for well over 20 academic publications, and it would take multiple blog posts to discuss all of them. To give you some idea of what our data has been, and will be, used for, we highlight three publications and one research project.

In 2016, we used OpenINTEL data to answer the very first question that sparked setting up OpenINTEL in the first place: how pervasive is the use of DDoS Protection Services (DPS)? In a paper led by OpenINTEL team member Mattijs Jonker, entitled “Measuring the Adoption of DDoS Protection Services”, we use 1.5 years of data to show not only that the use of DPSes is growing, but also how DPSes are used. In particular, we contrast short-term on/off use of DPSes with long-term “always on” use. The paper was presented at the ACM SIGCOMM Internet Measurement Conference 2016 in Santa Monica, California. At the same conference, OpenINTEL was presented to the Internet measurement community in the “works-in-progress” session.
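To make the distinction between on/off and “always on” use a little more concrete, here is a minimal Python sketch. It is not the method from the paper: the provider suffix, the function names, the labels and the rule of "two or more switches means on/off" are all assumptions made up for illustration. It only shows the general idea that daily DNS observations for a domain can be turned into a usage pattern.

```python
# Hypothetical DPS provider suffix, for illustration only (not a real provider).
DPS_SUFFIXES = ("dps-provider.example",)


def uses_dps(targets):
    """Return True if any DNS target (e.g. a CNAME or NS name) for a domain
    falls under one of the assumed DPS provider suffixes."""
    for target in targets:
        name = target.lower().rstrip(".")
        for suffix in DPS_SUFFIXES:
            # Match the suffix itself or any name below it, on label boundaries.
            if name == suffix or name.endswith("." + suffix):
                return True
    return False


def classify_usage(daily_flags):
    """Classify a domain from one boolean per measured day, in date order.

    The labels and the threshold are assumptions of this sketch.
    """
    if not any(daily_flags):
        return "never"
    if all(daily_flags):
        return "always-on"
    # Count how often the domain switched between protected and unprotected.
    switches = sum(1 for a, b in zip(daily_flags, daily_flags[1:]) if a != b)
    return "on/off" if switches >= 2 else "single-switch"


# Three consecutive daily observations for one made-up domain.
observations = [
    ["www.shop.example."],
    ["shop.dps-provider.example."],
    ["www.shop.example."],
]
flags = [uses_dps(targets) for targets in observations]
print(classify_usage(flags))
```

A real analysis would need a curated list of provider fingerprints and a more careful definition of what counts as a switch; the sketch only illustrates why a daily measurement makes this kind of pattern visible at all.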

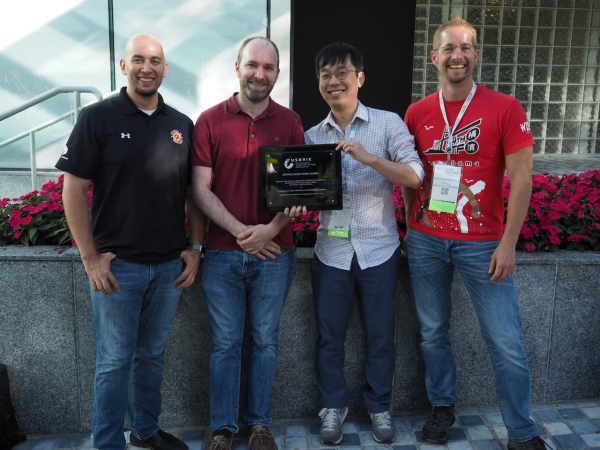

Our presentations at IMC 2016 led to the first large international collaboration with OpenINTEL data. Team member Roland van Rijswijk-Deij collaborated in a team led by then Northeastern University researcher Taejoong Chung (now with RIT) to perform a comprehensive longitudinal study of the DNSSEC ecosystem. This paper, which was presented at the USENIX Security Symposium 2017 in Vancouver, Canada, used 21 months of OpenINTEL data to show how DNSSEC is deployed in the .com, .net and .org domains, and what common mistakes are made during deployment of this security technology. The paper was well received and was awarded “Distinguished Paper” at the conference.

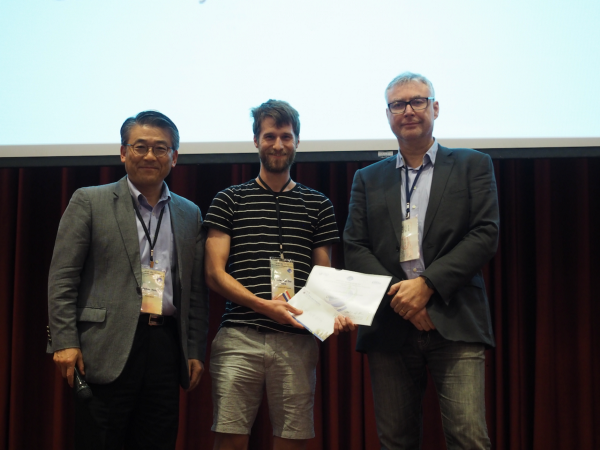

Over the course of 2017, we collaborated with our project partner SURFnet to study whether we could use OpenINTEL data for pro-active security purposes. In particular, we wanted to test if we could use data from OpenINTEL to combat a notoriously difficult-to-block type of spam e-mail called “snowshoe spam”. This type of spam operation relies on spreading the load of sending spam over many different IP addresses and domains, thus leaving a less obvious footprint (akin to how snowshoes spread the weight of a person to avoid sinking into the snow). This makes it difficult to use traditional blacklisting approaches to block this type of spam. OpenINTEL team member Olivier van der Toorn developed a methodology based on machine learning to predict which domains found in the OpenINTEL dataset are configured for this type of spam. He successfully tested his method in the SURFmailfilter services that SURFnet runs for academic institutions in the Netherlands, showing that he could reliably detect and help filter out snowshoe spam. His method found this type of spam operation up to 180 days before the domains finally made it to “classic” blacklists. Olivier presented his paper at the NOMS 2018 conference in Taipei, Taiwan, and received the best paper award for his contribution.

Finally, in 2018, OpenINTEL team member Anna Sperotto received confirmation that the MADDVIPR project, of which she is the principal investigator, will be funded. This project is a Netherlands/US collaboration between the University of Twente and CAIDA at the University of California San Diego. The project, set to commence in late 2018, will study the stability and resilience of the DNS against denial-of-service attacks, and will make extensive use of OpenINTEL data to map vulnerabilities in the DNS. Anna also supervises the Ph.D. research carried out within this project. As part of the international collaboration, significant parts of the OpenINTEL dataset will also be made available at CAIDA in the US, further extending access for other researchers.

Plans for the Future

As time passes, the value of the data collected by OpenINTEL only increases, as we can paint a more detailed picture of developments over time. This does not mean, however, that OpenINTEL isn’t gaining any new functionality. On the contrary, OpenINTEL is constantly being improved, and visitors to our website will see that new features are added to our measurements regularly. To end this blog, we want to look forward to ongoing developments that we expect to come to fruition over the next couple of months.

First of all, we are excited to be working on a complete overhaul of our measurement infrastructure. After four years of non-stop operation, our measurement machines are due to be replaced, and we are in the process of building an entirely new measurement cluster that will take over our measurements in early 2019. Our old hardware will then find a new place as a test bed for other research projects. As part of the overhaul, we are also implementing changes to our measurement software that will allow us to measure round-trip times for every individual query we perform, and will also allow us to register accurate time-to-live values of all the DNS records we collect. This will give us new visibility into potential stability issues and vulnerabilities to, e.g., hijacking in the DNS, and is data that will be used in, for example, the MADDVIPR project.

Another exciting direction we plan to explore in 2019 is to use OpenINTEL data for improving the open source software of our newest project partner NLnet Labs. Their DNS software is used throughout the core of the Internet. Using OpenINTEL data, we hope to further improve their software and with it the core of the Internet.

Acknowledgements

OpenINTEL would not have been possible without the help of many people and organisations. In particular, we would like to acknowledge the ongoing funding support of our project partners: SIDN, SURFnet, NLnet Labs and the University of Twente.

Further Reading

If you want to learn more about OpenINTEL, go to our website, and for the more academically inclined, we also suggest that you read our paper on the design of OpenINTEL.